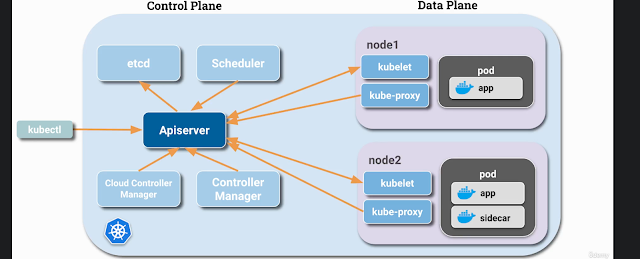

Kubernetes is a popular container orchestration system for managing containerized applications. Containerd is a lightweight container runtime that provides the underlying infrastructure for many Kubernetes deployments. This post shows how to set up Kubernetes with Containerd on Ubuntu machines using Ansible.

Environment

- Ubuntu VMs running on VMware

- Kubernetes (K8s) with the Containerd runtime
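The playbooks in this post target host groups named `master` and `workers`, and the hostname task reads a per-host variable called `new_hostname`. A minimal inventory sketch along those lines might look like the following. The file name, host names, and IP addresses are placeholders, not values from this setup.

```yaml
# inventory.yml (hypothetical file name; hosts and addresses are placeholders)
all:
  children:
    master:
      hosts:
        k8s-master:
          ansible_host: 192.168.1.10
          new_hostname: k8s-master
    workers:
      hosts:
        k8s-worker1:
          ansible_host: 192.168.1.11
          new_hostname: k8s-worker1
        k8s-worker2:
          ansible_host: 192.168.1.12
          new_hostname: k8s-worker2
```

Keeping `new_hostname` next to each host means the hostname task needs no extra variable files.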

User Creation

- Prompts for the name of the user to create

- Creates the user

- Adds a dedicated sudoers entry

- Sets up passwordless sudo for the user

- Copies the local user's SSH public key to the server for passwordless authentication

- Prints the details of the created user

- Updates the system

- Also includes steps that clean up existing Docker and containerd packages

The playbook for this step:

    - hosts: all
      become: yes
      vars_prompt:
        - name: "new_user"
          prompt: "Name of the account to create on the remote server"
          private: no
      tasks:
        - name: Creating the user {{ new_user }}.
          user:
            name: "{{ new_user }}"
            createhome: yes
            shell: /bin/bash
            append: yes
            state: present

        - name: Create a dedicated sudo entry file for the user.
          file:
            path: "/etc/sudoers.d/{{ new_user }}"
            state: touch
            mode: '0600'

        # validate runs visudo on the new content before the file is saved.
        - name: "Setting up sudo without password for user {{ new_user }}."
          lineinfile:
            dest: "/etc/sudoers.d/{{ new_user }}"
            line: '{{ new_user }} ALL=(ALL) NOPASSWD: ALL'
            validate: 'visudo -cf %s'

        # Reads ~/.ssh/id_rsa.pub of the user running Ansible on the control machine.
        - name: Set authorized key for {{ new_user }}, copying it from the current local user.
          authorized_key:
            user: "{{ new_user }}"
            state: present
            key: "{{ lookup('file', lookup('env','HOME') + '/.ssh/id_rsa.pub') }}"

        - name: Print the created user.
          shell: id "{{ new_user }}"
          register: new_user_created

        - debug:
            msg: "{{ new_user_created.stdout_lines[0] }}"

        # Package names are given as a list; a space-separated string is not split.
        - name: Remove Docker packages
          apt:
            name:
              - docker-ce
              - docker-ce-cli
              - containerd.io
            state: absent
            purge: yes

        - name: Remove Docker directories
          file:
            path: "{{ item }}"
            state: absent
          with_items:
            - /etc/docker
            - /var/lib/docker
            - /var/run/docker.sock

        - name: Remove containerd packages
          apt:
            name: containerd
            state: absent
            purge: yes

        - name: Remove containerd directories
          file:
            path: "{{ item }}"
            state: absent
          with_items:
            - /etc/containerd
            - /var/lib/containerd

        - name: "Update cache & Full system update"
          apt:
            update_cache: true
            cache_valid_time: 3600
            force_apt_get: true
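To check the result of this play, a short follow-up play can log in as the new account and confirm that sudo no longer asks for a password. This is only a sketch: it assumes the account was named `ansible`, which is the `remote_user` the later plays use.

```yaml
- hosts: all
  remote_user: ansible   # assumption: the name entered at the prompt
  gather_facts: no
  tasks:
    - name: Confirm that sudo works without a password
      command: sudo -n true   # -n makes sudo fail instead of prompting
      changed_when: false
```

If the sudoers entry is missing or invalid, the task fails right away instead of hanging on a password prompt.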

Package Installation in Master and Worker Nodes

- Copy the local hosts file to all servers for name resolution

- Update the hostname of each machine based on the names in the hosts file

- Turn swap off temporarily

- Disable swap in fstab

- Create an empty file for the containerd modules

- Configure the modules for containerd

- Create an empty file for the Kubernetes sysctl parameters

- Configure the sysctl parameters for Kubernetes

- Apply the sysctl parameters without a reboot

- Install the prerequisites for Kubernetes

- Add Docker’s official GPG key

- Add the Docker repository

- Install containerd

- Configure containerd

- Create the containerd config file

- Enable and start the containerd service

- Add Google's official GPG key

- Add the Kubernetes repository

- Install the Kubernetes cluster packages

- Enable the kubelet service persistently

- Reboot all the Kubernetes nodes
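Files under /etc/modules-load.d are read at boot, so the `overlay` and `br_netfilter` modules listed there are not loaded until the nodes restart. If the bridge sysctl settings should take effect before the reboot, one option is to load the modules explicitly. The task below is a sketch of that idea and assumes the `community.general` collection is installed on the control machine.

```yaml
- name: Load the containerd kernel modules immediately
  community.general.modprobe:
    name: "{{ item }}"
    state: present
  loop:
    - overlay
    - br_netfilter
```

A task like this would go before the one that runs `sysctl --system`.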

The playbook for this stage:

    - hosts: "master, workers"
      remote_user: ansible
      become: yes
      become_method: sudo
      become_user: root
      gather_facts: yes
      connection: ssh
      tasks:
        - name: Copying the host file
          copy:
            src: /etc/hosts
            dest: /etc/hosts
            owner: root
            group: root

        # new_hostname must be defined for each host, for example in the inventory.
        - name: "Updating hostnames"
          hostname:
            name: "{{ new_hostname }}"

        - name: Make the swap inactive
          command: swapoff -a

        - name: Remove swap entry from /etc/fstab.
          lineinfile:
            dest: /etc/fstab
            regexp: swap
            state: absent

        - name: Create an empty file for the containerd modules.
          copy:
            content: ""
            dest: /etc/modules-load.d/containerd.conf
            force: no

        - name: Configure modules for containerd.
          blockinfile:
            path: /etc/modules-load.d/containerd.conf
            block: |
              overlay
              br_netfilter

        - name: Create an empty file for Kubernetes sysctl params.
          copy:
            content: ""
            dest: /etc/sysctl.d/99-kubernetes-cri.conf
            force: no

        - name: Configure sysctl params for Kubernetes.
          lineinfile:
            path: /etc/sysctl.d/99-kubernetes-cri.conf
            line: "{{ item }}"
          with_items:
            - 'net.bridge.bridge-nf-call-iptables = 1'
            - 'net.ipv4.ip_forward = 1'
            - 'net.bridge.bridge-nf-call-ip6tables = 1'

        - name: Apply sysctl params without reboot.
          command: sysctl --system

        - name: Installing prerequisites for Kubernetes
          apt:
            name:
              - apt-transport-https
              - ca-certificates
              - curl
              - gnupg-agent
              - vim
              - software-properties-common
            state: present

        - name: Add Docker’s official GPG key
          apt_key:
            url: https://download.docker.com/linux/ubuntu/gpg
            state: present

        # The release name (bionic) is hard-coded; match it to your Ubuntu version.
        - name: Add Docker repository
          apt_repository:
            repo: deb [arch=amd64] https://download.docker.com/linux/ubuntu bionic stable
            state: present
            filename: docker
            update_cache: yes

        - name: "Update cache & Full system update"
          apt:
            update_cache: true
            upgrade: dist
            cache_valid_time: 3600
            force_apt_get: true

        - name: Install containerd.
          apt:
            name:
              - containerd.io
            state: present

        - name: Create the containerd configuration directory.
          file:
            path: /etc/containerd
            state: directory

        - name: Enable containerd service, and start it.
          systemd:
            name: containerd
            state: restarted
            enabled: yes
            daemon_reload: yes

        # apt.kubernetes.io is the legacy Kubernetes package repository;
        # newer Kubernetes releases are published at pkgs.k8s.io instead.
        - name: Add Google official GPG key
          apt_key:
            url: https://packages.cloud.google.com/apt/doc/apt-key.gpg
            state: present

        - name: Add Kubernetes repository
          apt_repository:
            repo: deb http://apt.kubernetes.io/ kubernetes-xenial main
            state: present
            filename: kubernetes
            mode: '0600'

        - name: "Update cache & Full system update"
          apt:
            update_cache: true
            upgrade: dist
            cache_valid_time: 3600
            force_apt_get: true

        - name: Installing Kubernetes cluster packages.
          apt:
            name:
              - kubeadm
              - kubectl
              - kubelet
            state: present

        - name: Enable service kubelet, and enable persistently
          service:
            name: kubelet
            enabled: yes

        - name: Reboot all the Kubernetes nodes.
          reboot:
            msg: "Reboot initiated by Ansible"
            connect_timeout: 5
            reboot_timeout: 3600
            pre_reboot_delay: 0
            post_reboot_delay: 30
            test_command: whoami
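The step list for this stage mentions creating a containerd config file, but the play itself only creates the /etc/containerd directory. One common way to fill that gap, shown here as a sketch rather than the author's exact tasks, is to write out containerd's default configuration and switch the runc cgroup driver to systemd, which is the driver kubeadm sets for the kubelet by default in recent releases. Both tasks are assumptions about what the missing step could look like.

```yaml
# Overwrites any packaged config.toml, which may ship with the CRI plugin disabled.
- name: Write the default containerd configuration
  shell: containerd config default > /etc/containerd/config.toml

# Assumes the default config contains the line "SystemdCgroup = false".
- name: Use the systemd cgroup driver for runc
  replace:
    path: /etc/containerd/config.toml
    regexp: 'SystemdCgroup = false'
    replace: 'SystemdCgroup = true'
```

These tasks would sit before the task that restarts containerd, so that the service picks up the new file. As written they rewrite the file on every run, so they always report a change.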

Master Configuration

- Pull all the required images

- Reset kubeadm if it is already configured

- Initialize the K8s cluster

- Create the directory for the kubeconfig file on the master

- Create a local kubeconfig file on the master

- Copy the kubeconfig file to the local Ansible server

- Generate the kubeadm join token for the workers and store it

- Copy the token to the master's tmp directory

- Copy the token to the local Ansible tmp directory

- Initialize the pod network with flannel

- Copy the output to a file on the master

- Copy the output to the local Ansible server

The playbook for the master:

    - hosts: master
      remote_user: ansible
      become: yes
      become_method: sudo
      become_user: root
      gather_facts: yes
      connection: ssh
      tasks:
        - name: Pulling images required for setting up a Kubernetes cluster
          shell: kubeadm config images pull

        - name: Resetting kubeadm
          shell: kubeadm reset -f
          register: output

        # The advertise address is read from the ens160 interface; adjust the name if yours differs.
        - name: Initializing Kubernetes cluster
          shell: kubeadm init --apiserver-advertise-address=$(ip a |grep ens160| grep 'inet ' | awk '{print $2}' | cut -f1 -d'/') --pod-network-cidr 10.244.0.0/16 --v=5
          register: myshell_output

        - debug:
            msg: "{{ myshell_output.stdout }}"

        - name: Create .kube in the home directory on the master server
          file:
            path: $HOME/.kube
            state: directory
            mode: '0755'

        - name: Copy admin.conf to the user's kube config on the master server
          copy:
            src: /etc/kubernetes/admin.conf
            dest: $HOME/.kube/config
            remote_src: yes

        # dest is a path on the Ansible control machine; change it to your own home directory.
        - name: Copy admin.conf to the user's kube config on the local Ansible server
          fetch:
            src: /etc/kubernetes/admin.conf
            dest: /Users/rahulraj/.kube/config
            flat: yes

        - name: Get the token for joining the nodes with the Kubernetes master.
          shell: kubeadm token create --print-join-command
          register: kubernetes_join_command

        - debug:
            msg: "{{ kubernetes_join_command.stdout_lines }}"

        - name: Copy K8s join command to a file on the master
          copy:
            content: "{{ kubernetes_join_command.stdout_lines[0] }}"
            dest: "/tmp/kubernetes_join_command"

        - name: Copy join command from master to local Ansible server
          fetch:
            src: "/tmp/kubernetes_join_command"
            dest: "/tmp/kubernetes_join_command"
            flat: yes

        - name: Install pod network
          shell: kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml
          register: myshell_output

        - name: Copy the output to a file on the master
          copy:
            content: "{{ myshell_output.stdout }}"
            dest: "/tmp/pod_network_setup.txt"

        - name: Copy network output from master to local Ansible server
          fetch:
            src: "/tmp/pod_network_setup.txt"
            dest: "/tmp/pod_network_setup.txt"
            flat: yes
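After the pod network manifest is applied, the control-plane node usually needs a little time before it reports Ready. A task along these lines could be appended to the master play to wait for that. It is a sketch, and the 300 second timeout is an arbitrary choice.

```yaml
- name: Wait for the control-plane node to become Ready
  command: kubectl wait --for=condition=Ready node --all --timeout=300s
  environment:
    KUBECONFIG: /etc/kubernetes/admin.conf   # written by kubeadm init
  changed_when: false
```

Waiting here means the worker play starts against a master that is already schedulable for the network pods.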

Worker Configuration

- Copy the token from the local Ansible file to the worker nodes

- Reset kubeadm

- Join the worker nodes to the master by running the join command

The playbook for the workers:

    - hosts: workers
      remote_user: ansible
      become: yes
      become_method: sudo
      become_user: root
      gather_facts: yes
      connection: ssh
      tasks:
        # The file holds a bootstrap token, so it is readable by root only.
        - name: Copy token to worker nodes.
          copy:
            src: /tmp/kubernetes_join_command
            dest: /tmp/kubernetes_join_command
            mode: '0600'

        - name: Resetting kubeadm
          shell: kubeadm reset -f
          register: output

        - name: Join the worker nodes with the master.
          command: sh /tmp/kubernetes_join_command
          register: joined_or_not

        - debug:
            msg: "{{ joined_or_not.stdout }}"
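When the master and worker plays run in the same `ansible-playbook` invocation, the temporary file is not strictly needed, because a registered variable stays available through `hostvars` for the rest of the run. The task below sketches that alternative. It assumes the `master` group contains exactly one host and that `kubernetes_join_command` was registered on it earlier in the same run.

```yaml
- name: Join the worker nodes using the command registered on the master
  command: "{{ hostvars[groups['master'][0]].kubernetes_join_command.stdout_lines[0] }}"
  register: joined_or_not
```

The file-based approach in the play above still has the advantage of working when the plays are run separately.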

The K8s cluster should now be up, with the worker nodes joined.
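One way to confirm this from Ansible is a short play that lists the nodes from the master. This is a sketch that reuses the `master` group and the `ansible` remote user from the plays above.

```yaml
- hosts: master
  remote_user: ansible
  become: yes
  tasks:
    - name: List the cluster nodes
      command: kubectl get nodes -o wide
      environment:
        KUBECONFIG: /etc/kubernetes/admin.conf
      register: cluster_nodes
      changed_when: false

    - debug:
        msg: "{{ cluster_nodes.stdout_lines }}"
```

Each worker should appear in the output alongside the master once its kubelet has registered.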