Installing Cgroups.

yum install libcgroup

Starting the cgroup configuration service

/etc/init.d/cgconfig start

Checking which subsystems the kernel provides and where they are mounted

lssubsys -am

ns

perf_event

net_prio

cpuset /cgroup/cpuset

cpu /cgroup/cpu

cpuacct /cgroup/cpuacct

memory /cgroup/memory

devices /cgroup/devices

freezer /cgroup/freezer

net_cls /cgroup/net_cls

blkio /cgroup/blkio
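In the listing above, mounted subsystems appear with a mount point and unmounted ones appear as a bare name. A minimal sketch of filtering out the unmounted ones follows; the function name `unmounted_subsystems` is hypothetical, and it assumes the two-column layout shown here.

```shell
# Print subsystems that an `lssubsys -am`-style listing shows without a mount point.
# Reads the listing on stdin: mounted entries have two fields (name and path),
# unmounted entries have only the name. Blank lines are ignored.
unmounted_subsystems() {
    awk 'NF == 1 { print $1 }'
}

# Possible use on a live system (requires libcgroup):
#   lssubsys -am | unmounted_subsystems
```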

The basic subsystems are:

cpuset assigns individual CPUs and memory nodes to cgroup tasks

cpu schedules CPU access (for example, according to relative shares, as in Figure 1, or for real-time processes)

cpuacct reports total CPU time used.

memory reports or limits memory use.

devices grants or denies access to devices.

freezer suspends or resumes tasks.

net_cls tags outgoing network packets with an identifier.

blkio reports or controls I/O bandwidth for block devices.

Checking which subsystems are mounted, each on its own filesystem

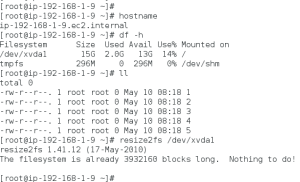

[root@ip-192-168-1-129 ~]# ls -al /cgroup/

total 8

drwxr-xr-x. 10 root root 4096 May 8 07:38 .

dr-xr-xr-x. 25 root root 4096 May 8 07:27 ..

drwxr-xr-x. 5 root root 0 May 8 08:31 blkio

drwxr-xr-x. 2 root root 0 May 8 08:31 cpu

drwxr-xr-x. 2 root root 0 May 8 08:31 cpuacct

drwxr-xr-x. 2 root root 0 May 8 08:31 cpuset

drwxr-xr-x. 2 root root 0 May 8 08:31 devices

drwxr-xr-x. 2 root root 0 May 8 08:31 freezer

drwxr-xr-x. 2 root root 0 May 8 08:31 memory

drwxr-xr-x. 2 root root 0 May 8 08:31 net_cls

[root@ip-192-168-1-129 ~]#

If a subsystem is not mounted, mount it manually.

Here the following subsystems are not mounted, so we can mount them:

ns

perf_event

net_prio

# mkdir /cgroup/ns

# ll /cgroup/ns/

total 0

# mount -t cgroup -o ns ns /cgroup/ns

# ll /cgroup/ns/

total 0

--w--w--w-. 1 root root 0 May 8 08:48 cgroup.event_control

-rw-r--r--. 1 root root 0 May 8 08:48 cgroup.procs

-rw-r--r--. 1 root root 0 May 8 08:48 notify_on_release

-rw-r--r--. 1 root root 0 May 8 08:48 release_agent

-rw-r--r--. 1 root root 0 May 8 08:48 tasks
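The same mkdir and mount pattern applies to the other unmounted subsystems. The sketch below only prints the commands rather than running them, so they can be reviewed first; the helper name `print_mount_commands` is hypothetical, and using `mkdir -p` instead of plain `mkdir` is a small assumption so that an existing directory is not an error.

```shell
# Dry run: print the commands that would mount each named subsystem under
# a base directory, following the mkdir + mount pattern shown above.
# $1 = base directory (e.g. /cgroup), remaining arguments = subsystem names.
# Nothing is executed here; run the printed commands as root if they look right.
print_mount_commands() {
    base=$1
    shift
    for subsys in "$@"; do
        printf 'mkdir -p %s/%s\n' "$base" "$subsys"
        printf 'mount -t cgroup -o %s %s %s/%s\n' "$subsys" "$subsys" "$base" "$subsys"
    done
}

# Example:
#   print_mount_commands /cgroup perf_event net_prio
```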

Creating cgroups under blkio for setting different I/O rates

[root@ip-192-168-1-129 cgroup]# cgcreate -g blkio:high_io

[root@ip-192-168-1-129 cgroup]# cgcreate -g blkio:low_io

[root@ip-192-168-1-129 cgroup]# cgcreate -g blkio:avg_io

The cgroups are created

==================

[root@ip-192-168-1-129 cgroup]# ll /cgroup/blkio/ | grep drwx

drwxr-xr-x. 2 root root 0 May 8 09:39 avg_io

drwxr-xr-x. 2 root root 0 May 8 09:38 high_io

drwxr-xr-x. 2 root root 0 May 8 09:39 low_io

[root@ip-192-168-1-129 cgroup]#

The files inside each cgroup are created automatically:

[root@ip-192-168-1-129 cgroup]# ll /cgroup/blkio/avg_io/

total 0

-r--r--r--. 1 root root 0 May 8 09:39 blkio.io_merged

-r--r--r--. 1 root root 0 May 8 09:39 blkio.io_queued

-r--r--r--. 1 root root 0 May 8 09:39 blkio.io_service_bytes

-r--r--r--. 1 root root 0 May 8 09:39 blkio.io_serviced

-r--r--r--. 1 root root 0 May 8 09:39 blkio.io_service_time

-r--r--r--. 1 root root 0 May 8 09:39 blkio.io_wait_time

--w--w----. 1 root root 0 May 8 09:39 blkio.reset_stats

-r--r--r--. 1 root root 0 May 8 09:39 blkio.sectors

-r--r--r--. 1 root root 0 May 8 09:39 blkio.throttle.io_service_bytes

-r--r--r--. 1 root root 0 May 8 09:39 blkio.throttle.io_serviced

-rw-rw-r--. 1 root root 0 May 8 09:39 blkio.throttle.read_bps_device

-rw-rw-r--. 1 root root 0 May 8 09:39 blkio.throttle.read_iops_device

-rw-rw-r--. 1 root root 0 May 8 09:39 blkio.throttle.write_bps_device

-rw-rw-r--. 1 root root 0 May 8 09:39 blkio.throttle.write_iops_device

-r--r--r--. 1 root root 0 May 8 09:39 blkio.time

-rw-rw-r--. 1 root root 0 May 8 09:39 blkio.weight

-rw-rw-r--. 1 root root 0 May 8 09:39 blkio.weight_device

--w--w----. 1 root root 0 May 8 09:39 cgroup.event_control

-rw-rw-r--. 1 root root 0 May 8 09:39 cgroup.procs

-rw-rw-r--. 1 root root 0 May 8 09:39 notify_on_release

-rw-rw-r--. 1 root root 0 May 8 09:39 tasks

[root@ip-192-168-1-129 cgroup]#
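Of the files listed above, blkio.weight is the writable parameter that holds a group's relative share of block I/O. Before writing a value to it, a quick range check can catch typos. This is only a sketch: the function name `valid_blkio_weight` is hypothetical, and the 100 to 1000 range is an assumption based on RHEL 6-era documentation, so check the documentation for the kernel you run.

```shell
# Return 0 if the argument is an integer inside the assumed blkio.weight range.
# The 100..1000 bounds are an assumption (RHEL 6-era documentation);
# other kernels may accept a different range.
valid_blkio_weight() {
    case $1 in
        ''|*[!0-9]*) return 1 ;;   # empty, or contains a non-digit
    esac
    [ "$1" -ge 100 ] && [ "$1" -le 1000 ]
}

# Possible guard before changing a group (cgset needs root and libcgroup):
#   valid_blkio_weight 1000 && cgset -r blkio.weight=1000 high_io
```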

Getting the current weight of the subsystem

cgget -r blkio.weight high_io

Setting the required weight for the subsystem

cgset -r blkio.weight=1000 high_io

Assigning processes to a cgroup

Using the PID of a process:

cgclassify -g blkio:high_io 1407

Writing a PID to the cgroup's tasks file has the same effect, shown here for PID 1410:

echo 1410 >> /cgroup/blkio/high_io/tasks

If the PID belongs to a shell, any process subsequently launched in that shell is automatically assigned to the shell's cgroup.
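When several PIDs need to be moved by hand, the tasks file expects them one at a time, so each PID gets its own write. A minimal sketch, with the hypothetical helper name `add_pids_to_cgroup`:

```shell
# Append each PID to a cgroup's tasks file, one write per PID.
# $1 = cgroup directory (for example /cgroup/blkio/high_io),
# remaining arguments = PIDs. Writing to a real cgroup requires root.
# Stops and returns non-zero at the first write that fails.
add_pids_to_cgroup() {
    cgdir=$1
    shift
    for pid in "$@"; do
        echo "$pid" >> "$cgdir/tasks" || return 1
    done
}

# Example:
#   add_pids_to_cgroup /cgroup/blkio/high_io 1407 1410
```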

Alternatively, the cgexec command can launch a process in the specified cgroup:

[root@ip-192-168-1-129 ~]# cgexec -g blkio:high_io httpd

httpd: apr_sockaddr_info_get() failed for ip-192-168-1-129.ec2.internal

httpd: Could not reliably determine the server's fully qualified domain name, using 127.0.0.1 for ServerName

[root@ip-192-168-1-129 ~]#

[root@ip-192-168-1-129 ~]#

[root@ip-192-168-1-129 ~]# pidof httpd

1521 1520 1519 1518 1517 1516 1515 1514 1513

[root@ip-192-168-1-129 ~]# cat /cgroup/blkio/high_io/tasks

1513

1514

1515

1516

1517

1518

1519

1520

1521

[root@ip-192-168-1-129 ~]#
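The comparison above between the `pidof` output and the tasks file can also be done mechanically. The sketch below checks that every PID passed in appears as a whole line in a tasks file; the name `all_pids_in_tasks` is hypothetical.

```shell
# Return 0 only if every given PID appears as a whole line in the tasks file.
# $1 = path to a tasks file, remaining arguments = PIDs to look for.
# -x matches whole lines only, -F treats the PID as a fixed string.
all_pids_in_tasks() {
    tasks_file=$1
    shift
    for pid in "$@"; do
        grep -qxF -- "$pid" "$tasks_file" || return 1
    done
}

# Possible use on a live system:
#   all_pids_in_tasks /cgroup/blkio/high_io/tasks $(pidof httpd)
```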

For services that have a configuration file in /etc/sysconfig, you can edit the configuration file to allocate the service to a cgroup automatically. For example, add this line to /etc/sysconfig/httpd:

CGROUP_DAEMON="blkio:high_io"

Then start the service; its processes are automatically executed in the specified cgroup.
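Adding the CGROUP_DAEMON line can be scripted so that running it twice does not duplicate the entry. This is a sketch only: `set_cgroup_daemon` is a hypothetical helper, and it does not replace an existing CGROUP_DAEMON line that has a different value.

```shell
# Ensure a sysconfig-style file contains CGROUP_DAEMON="<controller>:<group>".
# $1 = file (e.g. /etc/sysconfig/httpd), $2 = value (e.g. blkio:high_io).
# Appends the line only if that exact line is not already present.
set_cgroup_daemon() {
    line="CGROUP_DAEMON=\"$2\""
    grep -qxF -- "$line" "$1" 2>/dev/null || printf '%s\n' "$line" >> "$1"
}

# Example (as root):
#   set_cgroup_daemon /etc/sysconfig/httpd blkio:high_io
```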

===============

The changes made above are lost when the server reboots. To make the rules permanent, we need to add them to the configuration file.

Capturing Parameters

Up to this point, everything I've done on the command line won't persist across reboots. Once I've set up hierarchies, attached cgroups, and defined parameters the way I want them, I can capture an existing configuration with cgsnapshot:

# cgsnapshot -s > cgconfig-example.conf

Now check the configuration in /etc/cgconfig.conf and apply the needed changes from cgconfig-example.conf.
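For orientation, a group entry in cgconfig.conf is a nested block naming the group, the controller, and its parameters. The sketch below prints such an entry for a blkio group; `print_group_stanza` is a hypothetical helper, and the exact layout that cgsnapshot writes on your system may differ, so compare against the real snapshot file before copying anything.

```shell
# Print a cgconfig.conf-style group entry for a blkio group.
# $1 = group name (e.g. high_io), $2 = blkio.weight value (e.g. 1000).
# Output goes to stdout only; no file is modified.
print_group_stanza() {
    printf 'group %s {\n' "$1"
    printf '\tblkio {\n'
    printf '\t\tblkio.weight="%s";\n' "$2"
    printf '\t}\n'
    printf '}\n'
}

# Example:
#   print_group_stanza high_io 1000
```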

To clear all the settings, we can use cgclear:

[root@ip-192-168-1-129 ~]# cgclear

[root@ip-192-168-1-129 ~]# lscgroup

cgroups can't be listed: Cgroup is not mounted

[root@ip-192-168-1-129 ~]#